Across enterprises, a quiet contradiction is unfolding. Employees are expected to move faster, think smarter, and produce more, yet the very tools that enable this transformation, AI systems, are often blocked at the organizational level. Concerns around data leakage, compliance, and intellectual property have led many companies to restrict access to public AI platforms entirely.

And yet, the demand for AI has not disappeared. It has gone underground.

This tension has given rise to a new category of infrastructure-led solutions, such as Sanctorum, that promise the power of AI without compromising security, compliance, or control. At the center of this shift is a concept gaining rapid traction: sovereign AI.

The Hidden Reality of Shadow AI

When companies block tools like ChatGPT or other generative AI platforms, employees rarely stop using them. Instead, they turn to personal devices, copy and paste sensitive data into consumer tools, or rely on unapproved workflows. This phenomenon, often referred to as shadow AI, is rapidly becoming a major enterprise risk.

A 2023 study in MIT Sloan Management Review found that a significant percentage of employees admit to using unauthorized digital tools to improve productivity. In the context of AI, this behavior introduces serious vulnerabilities, including data exposure, regulatory violations, and loss of intellectual property.

Blocking AI does not eliminate risk. It redistributes it into less visible and less controllable channels.

Why Enterprises Are Saying No to AI

Enterprise resistance to AI adoption is not irrational. It is driven by legitimate concerns.

Data privacy regulations such as GDPR and India’s Digital Personal Data Protection Act impose strict requirements on how data is processed and stored. Sending sensitive company information to external AI APIs can create compliance violations.

There is also the issue of data ownership. Many organizations are wary of feeding proprietary information into third-party models whose usage and retention policies are unclear.

Security teams must also consider the risk of adversarial attacks, prompt injection, and model exploitation. Research published in IEEE Security and Privacy 2023 highlights the growing attack surface introduced by generative AI systems.

In this context, blocking AI tools is often seen as the safest immediate response.

Enter Sanctorum and the Rise of Sovereign AI

Sanctorum represents a new approach. Instead of denying access to AI, it brings AI inside the enterprise boundary.

Sovereign AI infrastructure allows organizations to deploy and operate AI systems within their own controlled environments. This can include on-premises GPU clusters, private cloud deployments, or edge-based systems, all configured so that data never leaves the organization.
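One way to picture the "data never leaves" guarantee is as an egress check applied before any prompt is sent to a model endpoint. The following is a minimal sketch, not a description of how Sanctorum works: the function name `is_internal_endpoint` and the `.corp.example` suffix are assumptions made for illustration, and a real deployment would also enforce this at the network layer.

```python
import ipaddress
from urllib.parse import urlparse

# Hypothetical internal DNS suffix; replace with the organization's own.
INTERNAL_SUFFIXES = (".corp.example",)


def is_internal_endpoint(url: str) -> bool:
    """Return True if the URL's host is a private IP or an internal hostname."""
    host = urlparse(url).hostname
    if host is None:
        return False
    try:
        # Literal IP addresses: accept only private ranges (e.g. 10.0.0.0/8).
        return ipaddress.ip_address(host).is_private
    except ValueError:
        # Not an IP literal: fall back to a hostname suffix allowlist.
        return host.endswith(INTERNAL_SUFFIXES)
```

A gateway could call this check and refuse to forward any request whose target fails it. Note that the hostname branch only inspects the name and does not verify what the name resolves to, so it is a first filter rather than a complete control.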

With this model, companies gain full control over data flows, model behavior, and compliance mechanisms. Sensitive information remains internal, while employees still benefit from AI-driven productivity.

Research on federated learning published in Nature Communications in 2022 supports this approach, showing that decentralized AI systems can achieve near-equivalent performance to centralized models while preserving data privacy.

Sanctorum builds on these principles by offering a secure, enterprise-ready AI layer that aligns with governance requirements.

How Sovereign AI Changes the Equation

Sovereign AI transforms AI adoption from a risk into a controlled capability.

Instead of relying on external APIs, organizations deploy internal models that can be audited, monitored, and fine-tuned. Access controls ensure that only authorized users and systems interact with sensitive data.
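To make the combination of access control and auditability concrete, here is a small sketch of a gateway that decides whether a user's role may send data of a given classification to an internal model, and records every decision. The roles, classification labels, and the `Gateway` class are hypothetical names chosen for illustration; they are not taken from any specific product.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical mapping from role to the data classifications it may submit.
ROLE_CLEARANCE = {
    "analyst": {"public", "internal"},
    "legal": {"public", "internal", "confidential"},
}


@dataclass
class Gateway:
    """Authorizes requests to an internal model and keeps an audit trail."""

    audit_log: list = field(default_factory=list)

    def authorize(self, user: str, role: str, classification: str) -> bool:
        # Unknown roles get an empty clearance set, so they are denied.
        allowed = classification in ROLE_CLEARANCE.get(role, set())
        # Record both allowed and denied attempts for later review.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "role": role,
            "classification": classification,
            "allowed": allowed,
        })
        return allowed


gateway = Gateway()
```

Logging denied attempts alongside allowed ones is a deliberate choice in this sketch: the denials are often where shadow usage patterns first become visible.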

Observability tools track model behavior, detect anomalies, and enforce compliance policies in real time.
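As a rough illustration of policy enforcement at request time, the sketch below scans a prompt for sensitive patterns and redacts them before the text reaches a model. The two regular expressions and the function names `scan_prompt` and `redact` are assumptions for this example; production systems typically rely on far more thorough detection than simple patterns.

```python
import re

# Illustrative patterns only; real policies would cover many more data types.
PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    # Sixteen digits in groups of four, optionally separated by space or hyphen.
    "card_number": re.compile(r"\b\d{4}(?:[ -]?\d{4}){3}\b"),
}


def scan_prompt(prompt: str) -> list[str]:
    """Return the sorted names of all sensitive patterns found in the prompt."""
    return sorted(name for name, pattern in PATTERNS.items() if pattern.search(prompt))


def redact(prompt: str) -> str:
    """Replace every match of a sensitive pattern with a labeled placeholder."""
    for name, pattern in PATTERNS.items():
        prompt = pattern.sub(f"[{name.upper()} REDACTED]", prompt)
    return prompt
```

The findings returned by `scan_prompt` could feed a monitoring dashboard, while `redact` lets the request proceed with the sensitive values removed instead of being blocked outright.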

This approach also enables customization. Models can be trained on domain-specific data, improving relevance and performance while maintaining confidentiality.

A 2024 report in Harvard Business Review notes that organizations with strong AI governance frameworks are significantly more successful in scaling AI initiatives without increasing risk exposure.

From Restriction to Enablement

The shift from blocking AI to enabling it securely represents a broader transformation in enterprise thinking.

Instead of asking how to prevent AI usage, forward-looking organizations are asking how to channel it safely and effectively.

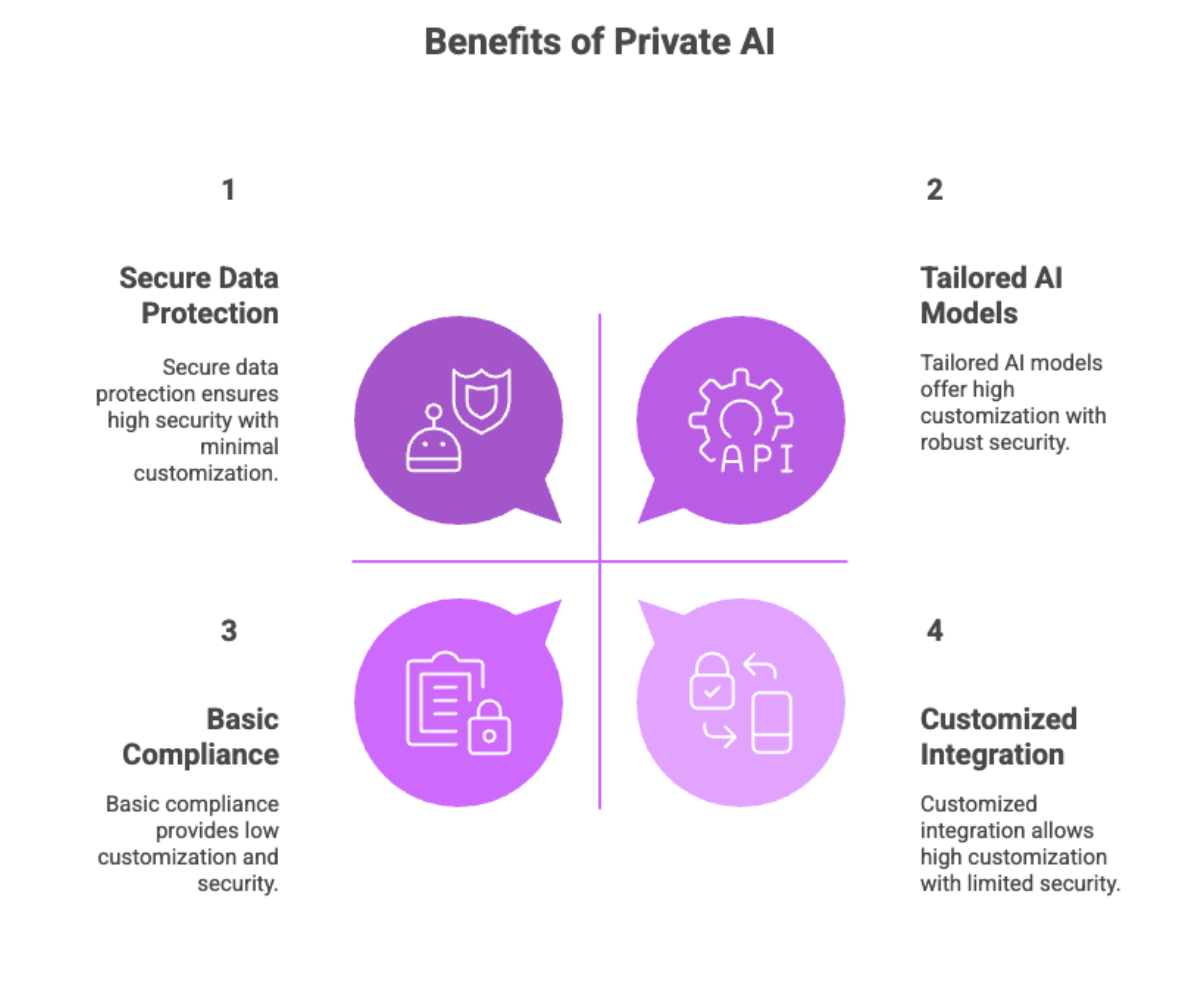

Sanctorum and similar sovereign AI solutions make this possible by aligning three critical dimensions: productivity, security, and compliance.

Employees gain access to powerful tools that enhance their capabilities. Organizations retain control over their data and systems. Risk is managed proactively rather than reactively.

Final Insight

The question is no longer whether employees will use AI. They already are. The real question is whether organizations will choose to control and benefit from that usage, or continue pushing it into the shadows.

Sovereign AI offers a path forward. By bringing intelligence inside the enterprise boundary, companies can unlock the full potential of AI without compromising on trust, security, or compliance. In doing so, they move from a posture of restriction to one of strategic enablement, where AI becomes not a liability, but a core competitive advantage.